AI has quietly become part of how most modern businesses operate. It screens job applicants, approves or rejects loans, flags suspicious transactions, and makes hundreds of other calls that affect real people every single day.

But here’s a question that doesn’t get asked enough — when AI gets it wrong, who actually takes the blame?

It’s not as straightforward as it sounds.


AI Cannot Be Held Legally Responsible

Let’s start with the most common misunderstanding. A lot of people assume that as AI becomes more “intelligent,” it should eventually be able to own its mistakes. That’s not how the law sees it — not today, and not anytime soon.

AI is a tool. A very sophisticated one, sure, but a tool nonetheless. It has no legal standing, no rights, and no obligations. You can’t sue an algorithm. You can’t hold a machine accountable in court.

So no matter how independently an AI system seems to be operating, the responsibility always traces back to the humans and organizations behind it. The technology doesn’t absorb blame — it just shifts the question of which human is responsible.

The Business Using AI Is on the Hook First

If your company is using an AI system to make decisions, your company is responsible for those decisions. Full stop.

You can’t point at the software and say “it wasn’t us, the AI did it.” Courts and regulators generally don’t accept that. If your AI tool discriminates against someone in a hiring process, denies a legitimate insurance claim, or causes financial harm, your organization is accountable.

This also means businesses are expected to actually understand the tools they’re deploying. Saying “we didn’t know how it worked” isn’t a defense. If anything, it makes things worse, because it suggests a lack of due diligence.

What About the Companies That Build AI?

The businesses using AI aren’t always the only ones who can be held liable. The developers and vendors who build these systems can share responsibility too — depending on what went wrong.

If an AI produces biased or harmful outcomes because of bad training data, flawed code, or poor design choices, the people who built it could be pulled into the legal picture as well.

That said, a lot of this comes down to contracts. The agreement between a business and its AI provider often determines who’s responsible for what if things go sideways. Vague or poorly written contracts can create messy situations where everyone points fingers and no one is clearly liable. Getting those terms right matters more than most companies realize.

Humans Still Need to Stay in the Loop

One point regulators keep coming back to: no matter how good AI gets, human oversight is non-negotiable, especially when the stakes are high.

Financial regulators, for example, have been clear that AI systems in banking and finance need to have meaningful human control built in. That means someone with actual authority needs to be able to review what the AI is doing, question its outputs, and override decisions when something doesn’t look right.

This isn’t just a compliance checkbox. It’s genuinely good risk management. When humans stay involved, errors get caught earlier and accountability is easier to establish.

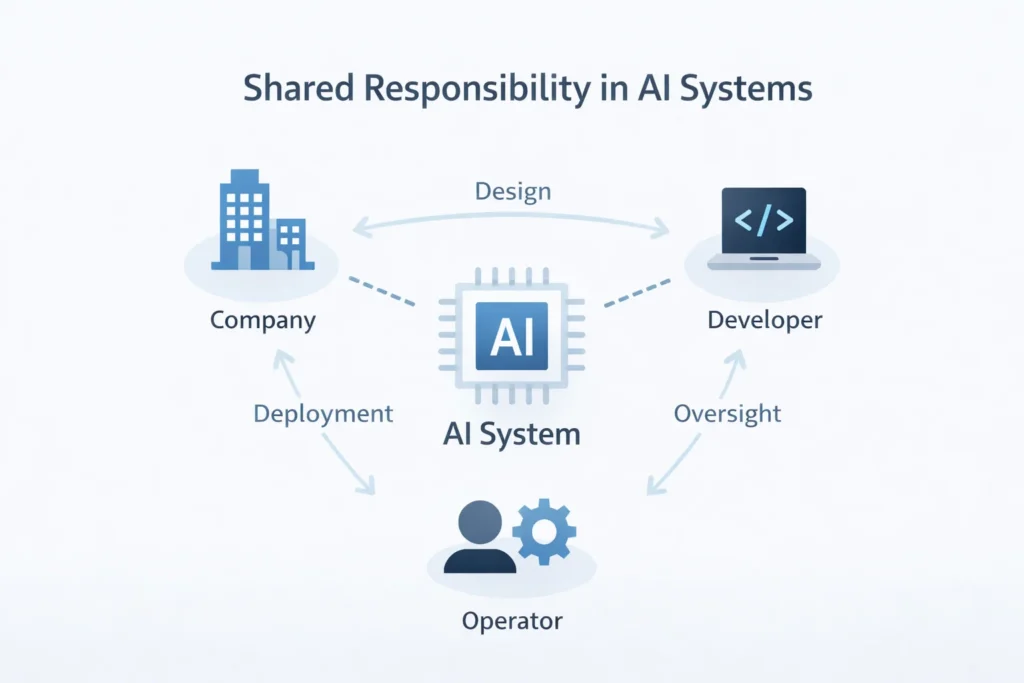

Often, Multiple Parties Share the Blame

Real-world AI failures rarely have a single villain. These systems pass through many hands — a developer builds it, a vendor sells it, a business deploys it, and an operator runs it day to day.

When something goes wrong, liability can spread across all of them depending on each party’s role.

A developer might be responsible for a technical flaw in the system. A company might be responsible for deploying it in a context it wasn’t designed for. An operator might be responsible for using it carelessly. Courts and regulators look at all of these layers.

This makes AI accountability genuinely complex, but it also makes it more realistic. In most cases, these failures aren’t caused by one bad decision. They’re the result of several parties each falling short.

The Transparency Problem

Here’s one of the bigger headaches with AI accountability: a lot of these systems are hard to explain, even to the people running them.

Many businesses have admitted they struggle to trace exactly how their AI systems reach specific decisions. And if you can’t explain how a decision was made, it becomes very difficult to figure out where it went wrong — or who should have stopped it.

This is a real legal and operational problem. As AI systems get more complex, this challenge is only going to grow. Businesses that invest in explainable AI — systems designed to show their reasoning — will be in a much stronger position when questions arise.


Governance Isn’t Optional

Companies serious about using AI responsibly need clear internal frameworks for managing it. That means written policies, regular audits, defined roles, and documented processes.

Good AI governance isn’t just about avoiding lawsuits. It also builds genuine trust — with customers who want to know their data is being used fairly, and with regulators who want to see that someone is paying attention.

Organizations that treat governance as an afterthought tend to find out the hard way why it matters.

The Legal Landscape Is Still Being Written

Here’s the honest reality — AI-specific laws are still catching up. Most countries don’t yet have dedicated AI legislation. Instead, regulators are applying existing laws around data privacy, consumer protection, and product liability to AI-related situations.

This creates a patchwork of rules that businesses have to navigate carefully, often without clear precedent to guide them.

That’s changing, though. Risk-based AI regulations, such as the European Union’s AI Act, are gaining traction globally, and the legal framework is likely to become more clearly defined over time. Businesses that start building responsible practices now will be far better prepared when those laws land.

The Bottom Line

AI isn’t just a tech issue anymore — it’s a legal and ethical one too. Every time your business lets an AI system make a decision that affects a customer, an employee, or a partner, your organization is taking on responsibility for that outcome.

The companies that understand this — and act accordingly — will be the ones that use AI well. The ones that don’t are taking on risks they probably haven’t fully thought through yet.